# AMDP Coding Guidelines

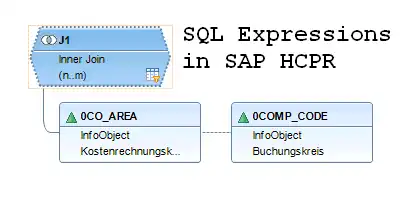

_These AMDP programming guidelines are a proposal for ABAP and SAP BW projects. They were originally written for the use case of AMDP transformation routines in SAP BW/4HANA. Feel free to copy, modify, and use them in your own projects as long as this article is linked as the source._

### Call by
